Production AI inference on wylon

A high-performance LLM inference platform built for developers and enterprises.

Token Factory (public sign-up coming soon)

Pay-per-token inference for leading open-source LLMs. OpenAI- and Anthropic-compatible APIs, ready to use with your existing clients.

Supported model families: MiniMax · Kimi · GLM · Qwen · DeepSeek

MiniMax

Long-context, productivity-grade workloads — document processing, summarization, multi-turn business conversations.

Kimi

Solid on multimodal, ultra-long context, and code — a popular foundation for agents and developer tooling.

GLM

Strong on Chinese corpora — covers general chat, tool use, and agentic workflows.

Qwen

Full lineup from on-device tiny models to flagship MoEs, balancing performance and cost.

DeepSeek

Known for reasoning, code, and math, with an MoE line that offers excellent price-performance.

And more

See the full list, context lengths, and pricing in the model catalog and pricing pages.

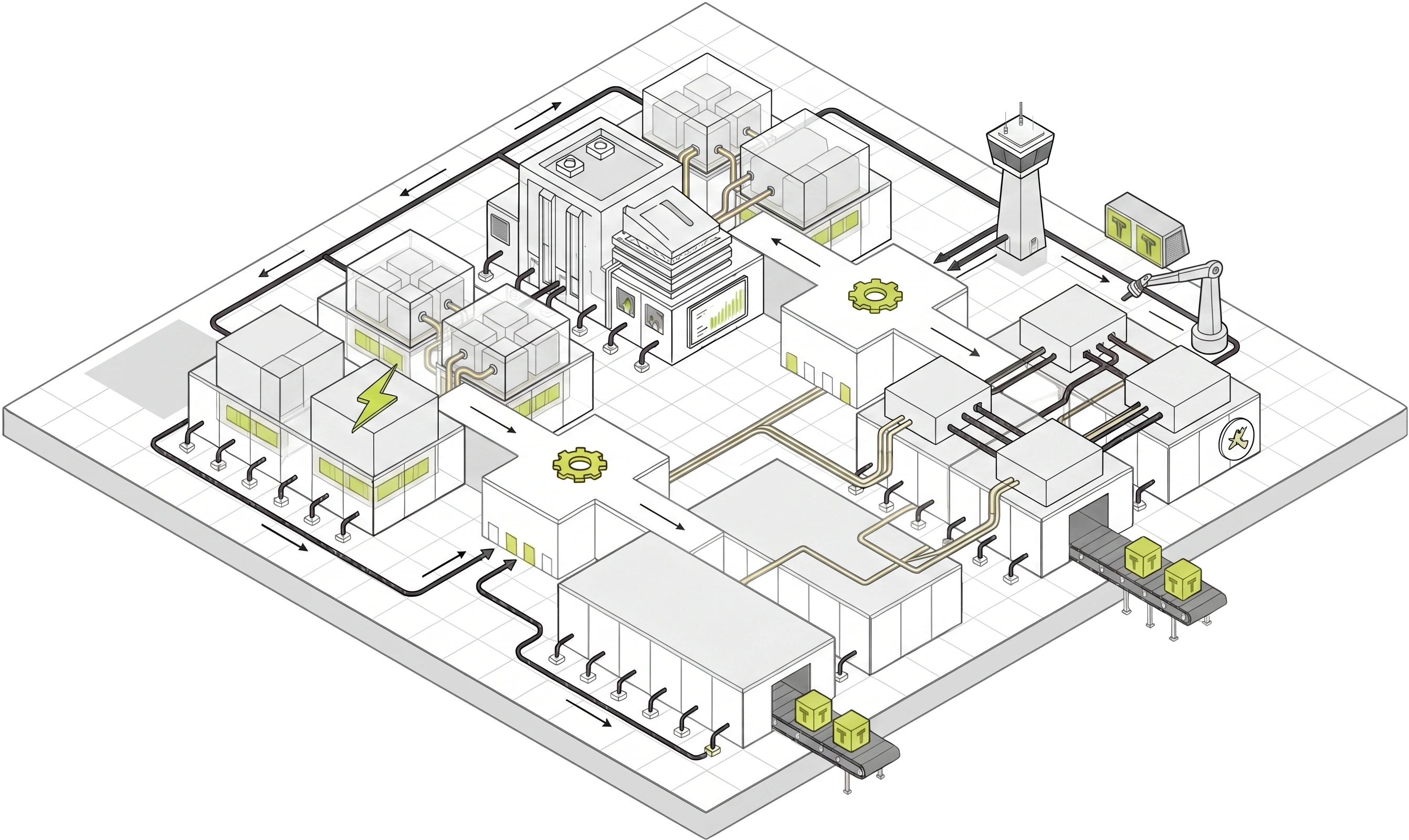

GPU Service (coming soon)

GPU compute across instances, bare-metal servers, and dedicated clusters. Now accepting early-access requests.

GPU Instance

On-demand single- or multi-GPU instance containers — provisioned in minutes, billed by the hour.

Bare-Metal

Dedicated GPU servers with full hardware control and resource isolation.

Cluster

Multi-node networked compute pool, sized for large-scale inference.

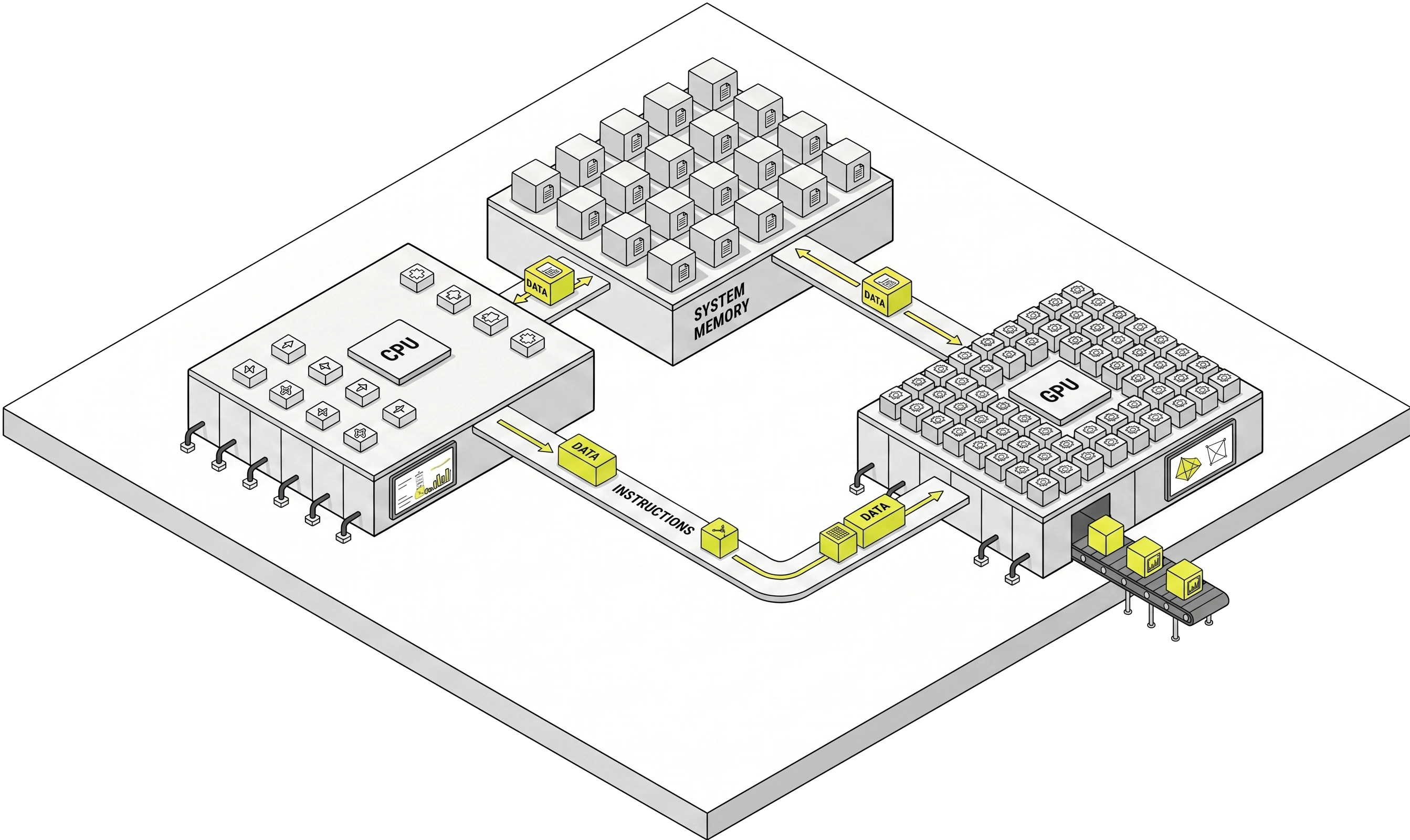

An end-to-end inference system

A super-node GPU architecture with system-level hardware-software co-design.

A vertically integrated Token Factory

Drop-in compatible API

OpenAI- and Anthropic-compatible API surface — set the base URL and you're done.
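As a rough sketch of what "set the base URL" can look like in practice, the snippet below builds an OpenAI-style chat completion request using only the Python standard library. The base URL, API key, and model ID are placeholders rather than real wylon values, and the `/chat/completions` path is the OpenAI convention that a compatible endpoint is assumed to follow. The request is constructed but not sent.

```python
import json
import urllib.request

# Hypothetical placeholders: replace with the values issued for your account.
BASE_URL = "https://api.example.invalid/v1"
API_KEY = "YOUR_API_KEY"
MODEL = "your-model-id"


def build_chat_request(base_url, api_key, model, user_message):
    """Build (but do not send) an OpenAI-style chat completion request."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": user_message}],
    }
    return urllib.request.Request(
        url=base_url.rstrip("/") + "/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


request = build_chat_request(BASE_URL, API_KEY, MODEL, "Hello")
print(request.full_url)
```

With the official OpenAI Python client, the equivalent change is usually limited to passing `base_url` and `api_key` to the client constructor and leaving the rest of the calling code untouched.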

Full-stack observability

Track TTFT, TPS, cache-hit ratio, and per-token usage in real time.
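For readers who want to cross-check these numbers on the client side, here is a minimal sketch of how TTFT, TPS, and cache-hit ratio are commonly derived from a streamed response. The function names and the exact definitions (for example, measuring TPS over the decode phase only) are assumptions for illustration; the platform's own dashboards may define these metrics differently.

```python
from dataclasses import dataclass


@dataclass
class StreamMetrics:
    ttft_seconds: float       # time from sending the request to the first token
    tokens_per_second: float  # decode throughput after the first token


def compute_stream_metrics(request_sent_at, token_times):
    """Derive TTFT and TPS from the arrival timestamps (seconds) of each token."""
    if not token_times:
        raise ValueError("no tokens received")
    ttft = token_times[0] - request_sent_at
    decode_window = token_times[-1] - token_times[0]
    decoded = len(token_times) - 1  # tokens produced after the first one
    tps = decoded / decode_window if decode_window > 0 else 0.0
    return StreamMetrics(ttft_seconds=ttft, tokens_per_second=tps)


def cache_hit_ratio(cached_prompt_tokens, total_prompt_tokens):
    """Fraction of prompt tokens served from cache instead of recomputed."""
    if total_prompt_tokens == 0:
        return 0.0
    return cached_prompt_tokens / total_prompt_tokens


# Example: request sent at t=0.0 s, five tokens arriving every 0.5 s.
metrics = compute_stream_metrics(0.0, [0.5, 1.0, 1.5, 2.0, 2.5])
print(metrics.ttft_seconds, metrics.tokens_per_second)
print(cache_hit_ratio(750, 1000))
```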

System-level KV-cache acceleration

A dedicated KV-cache engine with hybrid tiering accelerates repeated-prefix and long-context inference.
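To illustrate why repeated prefixes benefit from a KV cache, the toy sketch below counts how many leading tokens of a new prompt already match a previously seen prompt; only the remaining tokens would need prefill. This is a conceptual illustration with hypothetical function names, not a description of how the wylon engine or its tiering is implemented.

```python
def shared_prefix_length(cached_tokens, new_tokens):
    """Number of leading tokens that two token sequences have in common."""
    n = 0
    for a, b in zip(cached_tokens, new_tokens):
        if a != b:
            break
        n += 1
    return n


def prefill_tokens_needed(cached_prompts, new_tokens):
    """Tokens left to prefill after reusing the longest matching cached prefix."""
    best = max(
        (shared_prefix_length(c, new_tokens) for c in cached_prompts),
        default=0,
    )
    return len(new_tokens) - best


# A shared system prompt (tokens 1, 2, 3) followed by a new user turn.
cached = [[1, 2, 3, 4]]
print(prefill_tokens_needed(cached, [1, 2, 3, 9, 10]))
```

In this example three of the five prompt tokens match the cached sequence, so only two tokens would need fresh prefill work.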

Topology-aware MoE acceleration

The super-node architecture enables topology-aware scheduling for tensor, pipeline, and expert parallelism (TP/PP/EP).

Multi-node high availability

LLM-aware scheduling across an elastic GPU topology for higher availability and predictable scale.

Multi-vendor GPU infrastructure

Broad support across leading GPU vendors — Cambricon, Biren, Sunrise, MetaX, and more.

High-performance GPU interconnect topology

GPU communication topology co-designed with MoE parallelism for large-scale inference.

Energy-efficient inference design

The wylon super-node platform jointly optimizes scheduling, batching, and thermals to deliver high energy efficiency at scale.

Built for LLM inference workloads

Full-stack, hardware-software co-designed

End-to-end cloud infrastructure across GPU compatibility, super-node architecture, inference runtime, and API services — tightly integrated to reduce performance overhead.

Availability SLA

Token Factory delivers up to 99.9% availability, sized for production-grade traffic.
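As a quick worked example, an availability percentage can be converted into a downtime budget. The sketch below assumes a 30-day measurement window, which the page does not specify, and treats 99.9% as the achieved figure even though the page states it as an upper bound.

```python
def downtime_budget_minutes(availability_percent, period_days=30):
    """Maximum downtime, in minutes, consistent with the given availability."""
    total_minutes = period_days * 24 * 60
    return total_minutes * (100 - availability_percent) / 100


# 30 days is 43,200 minutes; 0.1% of that is about 43.2 minutes.
print(round(downtime_budget_minutes(99.9), 1))
```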

Ops overhead

Drop-in inference API — no infrastructure to manage.

Speedup

Backed by system-level caching, prefill is up to 10× faster than baseline implementations.

Chip families supported

Native compatibility across Biren, Cambricon, MetaX, Sunrise, and more.

Try the wylon Token Factory

Token Factory is open for sign-ups and can be integrated in minutes. GPU Service is accepting early-access applications.